LLMs, Agents, and Workflows—What’s the Difference?

An AI breakdown that actually makes sense.

If you’ve been trying to build with AI, you’ve probably run into a mess of confusing terms.

LLMs, AI agents, agentic workflows, RAG, data pipelines—it all sounds important, but what does it actually mean when you’re building something?

Let’s break it down in a way that makes sense.

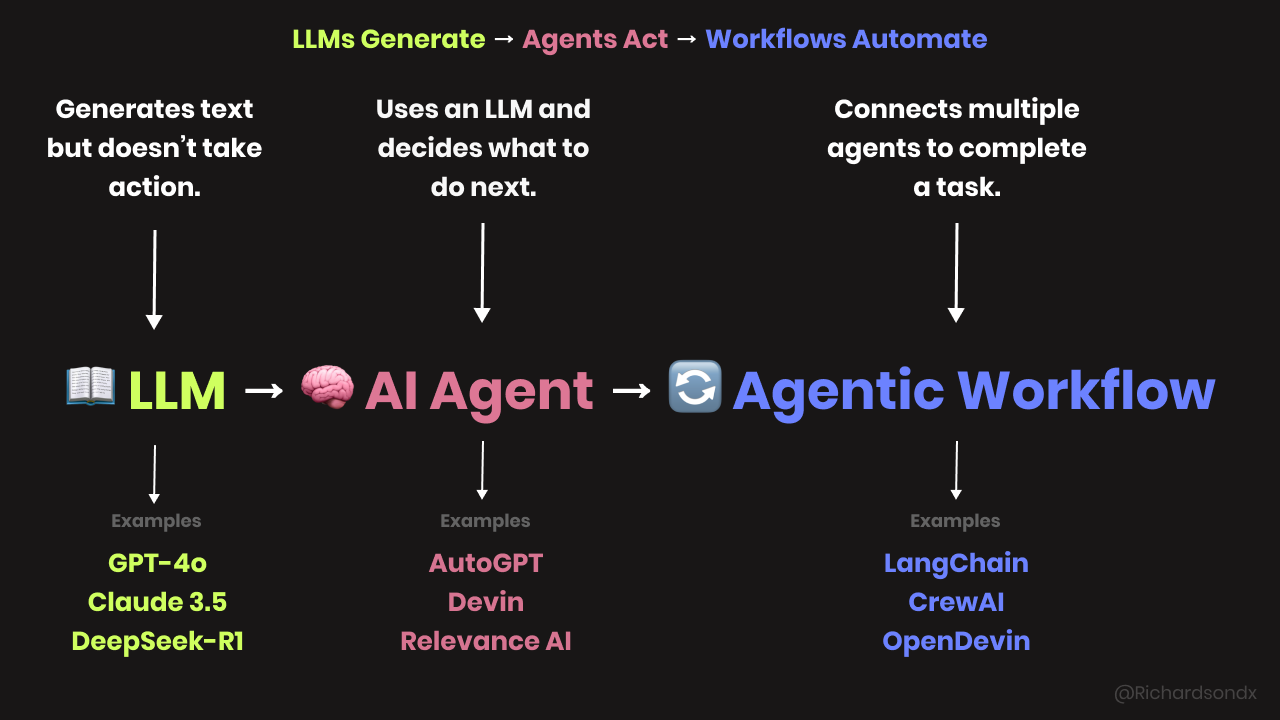

LLM vs. AI Agent vs. AI Model

Think of an AI model as the big category. It’s any system that processes data and generates an output. Some models predict stock prices, some recognize images, and some, like LLMs, generate text.

LLMs (Large Language Models) are just one type of AI model. They’re trained on massive amounts of text and can generate responses based on patterns in that data. But they don’t "think" or make decisions.

Some well-known LLMs: GPT-4o, DeepSeek-R1, Claude 3.5 Sonnet, Gemini 1.5 Pro, Mistral-7B.

An AI agent is what happens when you take an LLM and give it the ability to act. It decides what to do based on the information it has.

Say you want a meal plan for the week:

An LLM (like GPT-4o) can generate the plan.

An AI agent can take that plan, order the groceries, and schedule deliveries.

One generates text. The other makes decisions. That’s the difference.
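Here's a minimal sketch of that split in Python. Everything in it is made up for illustration: `fake_llm` stands in for a real model call, and `order_groceries` and `schedule_delivery` are hypothetical tools, not real APIs.

```python
def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call: returns a canned meal plan."""
    return "Mon: pasta, tomatoes\nTue: rice, beans\nWed: pasta, spinach"

def order_groceries(items: list[str]) -> str:
    """Hypothetical tool: pretend to place a grocery order."""
    return f"ordered {len(items)} items"

def schedule_delivery(day: str) -> str:
    """Hypothetical tool: pretend to book a delivery slot."""
    return f"delivery booked for {day}"

def plain_llm(goal: str) -> str:
    # An LLM on its own only turns a prompt into text.
    return fake_llm(goal)

def meal_agent(goal: str) -> list[str]:
    # The agent takes the LLM output and then acts on it with tools.
    plan = fake_llm(goal)
    # Pull the unique ingredients out of lines shaped like "Mon: pasta, tomatoes".
    items = sorted({
        item.strip()
        for line in plan.splitlines()
        for item in line.split(":", 1)[1].split(",")
    })
    actions = []
    if items:  # the decision: only order when the plan needs ingredients
        actions.append(order_groceries(items))
        actions.append(schedule_delivery("Sunday"))
    return actions
```

`plain_llm` stops at the text. `meal_agent` reads the same text and turns it into actions, which is the part a bare LLM can't do.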

AI Agent vs. Agentic Workflow vs. Data Pipeline

An AI agent makes a single decision at a time.

An agentic workflow connects multiple decisions into a process.

A data pipeline moves information between tools but doesn’t make decisions.

Think about running a pizza delivery business:

An AI agent is like your pizza chef—it decides when to start cooking based on the number of orders.

An agentic workflow is like your whole kitchen staff working together. The chef makes the pizza, the cashier handles payments, and the driver delivers it—all happening in steps.

A data pipeline is like the conveyor belt moving ingredients to the chef. It doesn’t decide what to cook, just moves things where they need to go.

If you’re automating tasks, Zapier and n8n act as pipelines—they connect apps and move data, but they don’t "think."

Relevance AI acts more like an agent, because it makes decisions based on the data it’s processing.
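To make the three roles concrete, here's a rough sketch of the pizza example in Python. The function names and the batch size of 2 are assumptions for illustration, and a real agent would use a model to decide rather than a simple count.

```python
def pipeline(orders: list[dict]) -> list[dict]:
    # Data pipeline: moves and reshapes data, makes no decisions.
    return [{"id": o["id"], "pizza": o["pizza"].lower()} for o in orders]

def chef_agent(queue: list[dict], batch_size: int = 2) -> bool:
    # Agent: one decision. Start cooking only when enough orders are waiting.
    return len(queue) >= batch_size

def kitchen_workflow(orders: list[dict]) -> list[str]:
    # Agentic workflow: several steps chained together around that decision.
    queue = pipeline(orders)
    if not chef_agent(queue):
        return ["waiting for more orders"]
    log = []
    for order in queue:
        log.append(f"cooked {order['pizza']}")
        log.append(f"charged order {order['id']}")
        log.append(f"delivered order {order['id']}")
    return log
```

With one order the workflow waits. With two it cooks, charges, and delivers each one in sequence.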

RAG vs. Fine-Tuning vs. Prompt Engineering

Let’s say you need to study for an exam. You’ve got a few ways to prepare:

RAG (Retrieval-Augmented Generation): You read your textbook but also check the latest research online.

Fine-tuning: You rewrite your textbook to focus only on the topics you care about.

Prompt engineering: You highlight key sections and add notes so you can quickly find answers.

RAG helps when your LLM doesn’t know the latest information. Instead of guessing, it retrieves relevant data at query time from sources like a company database, news articles, or API calls, and the model answers using that data.

Fine-tuning works when you need the model to specialize in a certain task. If you're building a legal assistant, you’d fine-tune an LLM on case law.

Prompt engineering is the fastest way to get better results without changing the model. You tweak the input so the AI gives you more useful output.
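Here's a small sketch of RAG and prompt engineering working together. It's a toy under stated assumptions: the documents in `DOCS` are invented, and retrieval uses plain word overlap where a real system would more likely use embeddings and a vector store. Fine-tuning isn't shown because it changes the model itself, not the input.

```python
import re

# Hypothetical knowledge base, invented for this example.
DOCS = [
    "Refund policy: customers can request a refund within 30 days.",
    "Shipping: orders ship within 2 business days.",
    "Support hours: Monday to Friday, 9am to 5pm.",
]

def tokenize(text: str) -> set[str]:
    # Lowercase the text and split it into a set of words and numbers.
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, docs: list[str], k: int = 1) -> list[str]:
    # RAG step: rank documents by how many words they share with the question.
    q = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(question: str, context: list[str]) -> str:
    # Prompt engineering step: a role, grounding context, and an explicit rule.
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "You are a support assistant. Answer using only the context below.\n"
        f"Context:\n{joined}\n"
        f"Question: {question}\n"
        "If the context does not contain the answer, say you don't know."
    )

question = "How do I request a refund?"
prompt = build_prompt(question, retrieve(question, DOCS))
```

The resulting `prompt` is what you'd send to the model. The model hasn't changed at all, only what it gets to read before answering.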

When should you use each?

If your app needs real-time or external data → Use RAG.

If you need a model trained on your specific knowledge → Use fine-tuning.

If you want better responses without retraining → Use prompt engineering.

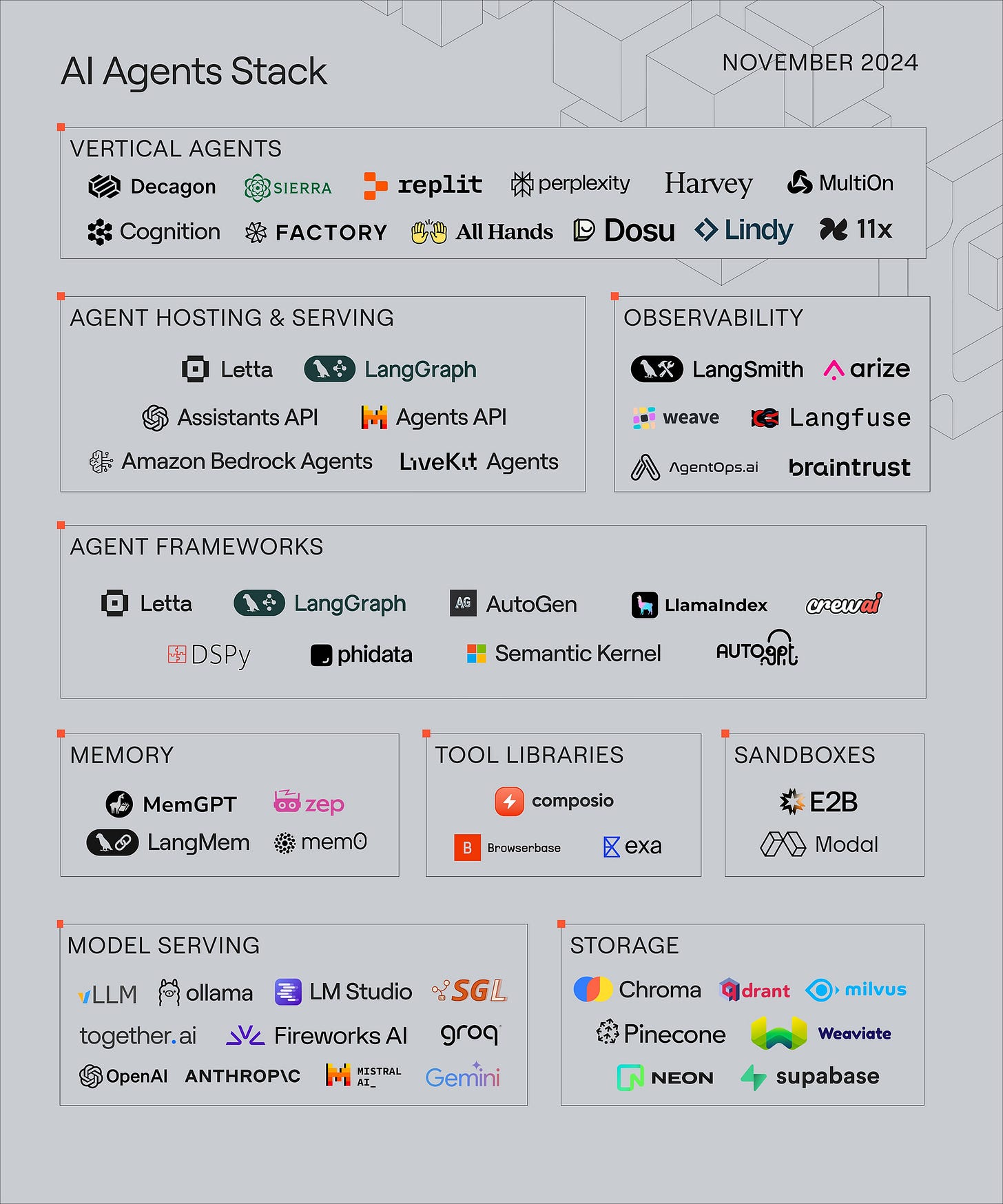

AI Model vs. API vs. Framework (LangChain, LlamaIndex, etc.)

Let’s say you want to build an AI-powered chatbot. You need three things:

An AI model (the brain) → This is the LLM that generates responses.

An API (the remote control) → This lets your app send questions to the LLM and get responses.

A framework (the toolkit) → This connects everything so your chatbot can remember past conversations, pull in external data, and run efficiently.

LangChain and LlamaIndex help manage the workflow between the model, memory, and external tools.

If you’re just making simple API calls to OpenAI or Anthropic, you don’t need a framework. If you’re building a complex AI assistant with multiple steps, a framework makes things easier.
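As a rough sketch of how the three layers relate: `call_model` below is a hypothetical stand-in for the API call (a real app would send the messages to the provider over HTTP), and `ChatSession` is a tiny, made-up version of the glue a framework provides. It isn't how LangChain or LlamaIndex are actually implemented.

```python
from dataclasses import dataclass, field

def call_model(messages: list[dict]) -> str:
    """Hypothetical stand-in for an API call to a hosted LLM.

    A real app would send `messages` to the provider here and return its reply.
    """
    last = messages[-1]["content"]
    return f"(reply to: {last}) [saw {len(messages)} messages]"

@dataclass
class ChatSession:
    """Made-up framework-style glue: keeps the conversation history."""
    history: list[dict] = field(default_factory=list)

    def ask(self, text: str) -> str:
        # Store the user turn, send the full history, then store the reply.
        self.history.append({"role": "user", "content": text})
        reply = call_model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply
```

Calling `call_model` directly is the "simple API call" case. `ChatSession` adds memory on top, so the second question goes out with the first exchange attached.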

Bringing It All Together (How to Use This to Build an AI App)

Let’s say you’re building an AI-powered research assistant. You want it to answer questions based on company data and external sources.

Use an LLM (GPT-4o, Claude 3.5 Sonnet) to generate responses.

Add RAG so it pulls live data from reports and documents.

Use an AI agent to decide what information to retrieve and how to structure the response.

Set up an agentic workflow so the assistant can follow multiple steps (e.g., summarize data, create a report, and email it).

Build a data pipeline to move information between your CRM, research database, and email system.

Use APIs to connect everything, and a framework (LangChain, LlamaIndex) to manage the workflow.

Now you’ve got a research assistant that is pulling in real data, making decisions, and automating tasks.
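Here's a stripped-down sketch of how those steps might line up in code. All of it is assumed for illustration: `REPORTS` is invented data, `fake_llm` replaces a real model call, the email address is a placeholder, and the `outbox` list stands in for a real email system.

```python
# Hypothetical company data, invented for this example.
REPORTS = {
    "q3 sales": "Q3 sales rose 12% on new enterprise deals.",
    "churn": "Churn fell to 3% after the onboarding redesign.",
}

def fake_llm(prompt: str) -> str:
    # Hypothetical LLM stand-in: echoes the first context line as a "summary".
    return "Summary: " + prompt.split("\n")[1]

def choose_sources(question: str) -> list[str]:
    # Agent step: decide which reports are relevant to the question.
    q = question.lower()
    return [key for key in REPORTS if any(word in q for word in key.split())]

def research_assistant(question: str, outbox: list[dict]) -> str:
    keys = choose_sources(question)                  # agent decision
    if not keys:
        return "No matching reports found."
    context = "\n".join(REPORTS[k] for k in keys)    # RAG retrieval
    prompt = f"Answer from this context:\n{context}\nQuestion: {question}"
    report = fake_llm(prompt)                        # LLM generation
    # Pipeline hand-off: queue the report for the email system.
    outbox.append({"to": "team@example.com", "body": report})
    return report
```

Each line in `research_assistant` maps to one piece from the list above, and the function as a whole is the workflow that chains them.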

That’s how all these pieces fit together.

You can read a full breakdown of this stack here: The AI agents stack

What I’ve Been Up To

Launched GitGlance on Product Hunt this week.

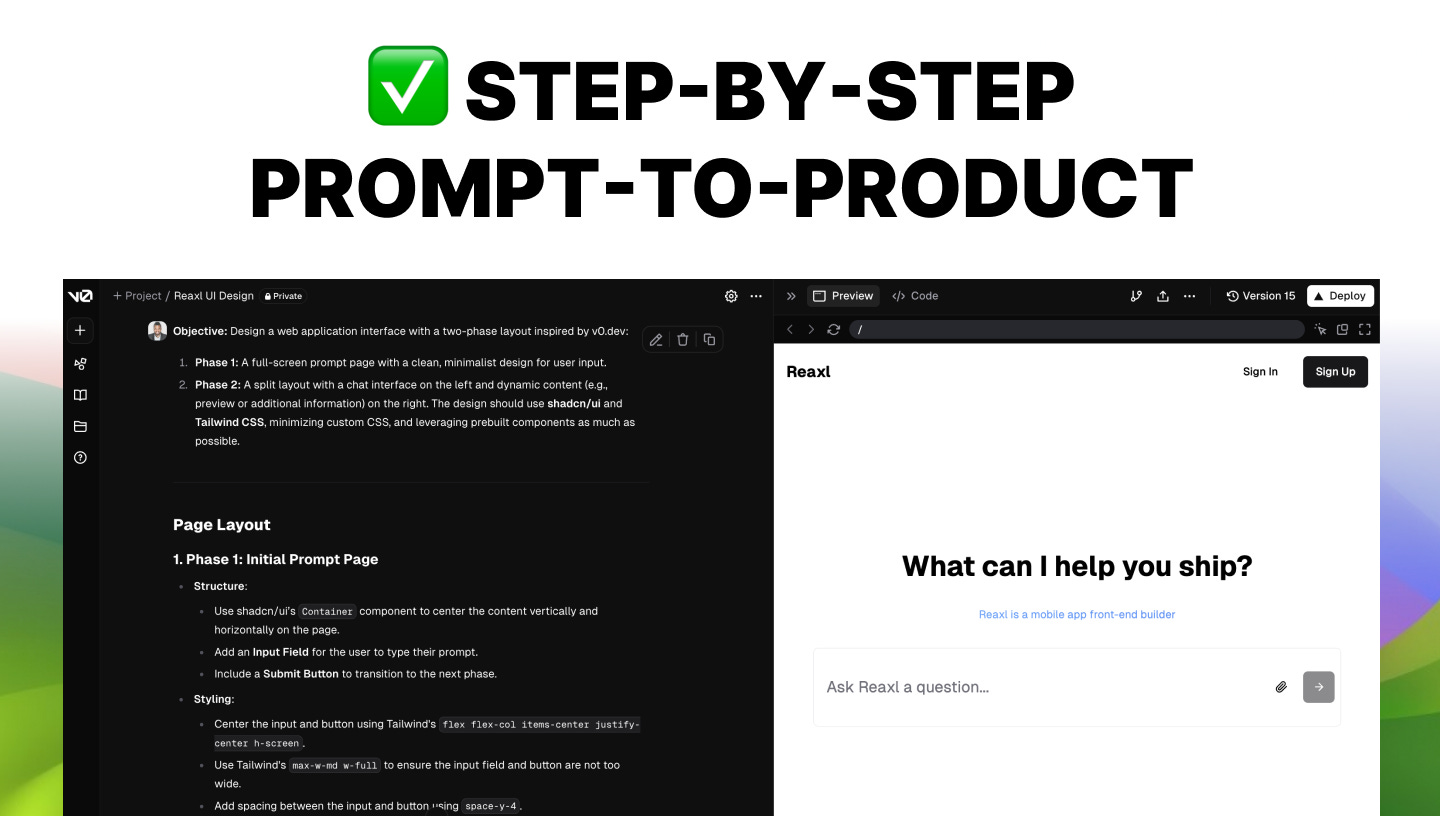

Rebranded SaaSpreneur Academy to AI Founders Club. The community naturally shifted toward AI startups, and this aligns better with what we’re building. If you want to see my full breakdowns of AI products, prompts, and code, join here. The courses I’m most excited about are the ones in my Prompt-to-Product series, which show you exactly how I go from an idea to a working product using AI to code.

Got access to Claude Code. It’s great for writing tests and fixing bugs. Running an AI agent autonomously isn’t cheap, which explains why Devin costs $500. More on that in this video.

Testing new sales software. Telescope feels like Tinder for finding customers, and I’m diving deeper today. I’ll document my thoughts on each tool on X. You can follow it here.

SaaS email is expensive. I even made a course on it. But I felt that there were still a lot of things I needed to cover. So this week I’ve decided to make a full course on how I set up email for my SaaS while keeping the cost low. That full guide will be available in the AI Founders Club.

More next week.

Learn it to make it!

Cheers ✌🏾

- Richardson